This is a follow-up to the SocketCAN proposal discussion for the NuttX RTOS.

SocketCAN lets CAN controllers be addressed through the network socket layer (see the picture below, taken from the SocketCAN article on Wikipedia).

The NuttX architecture already supports the socket interface for networking, so a SocketCAN driver should fit nicely.

The UAVCAN library already supports the SocketCAN interface, so it can easily be used in Linux systems.

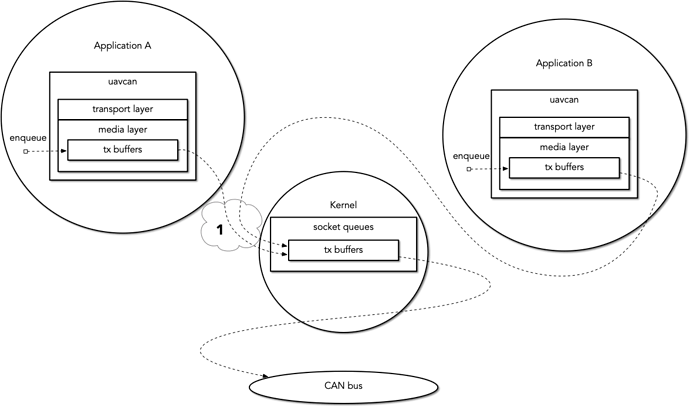

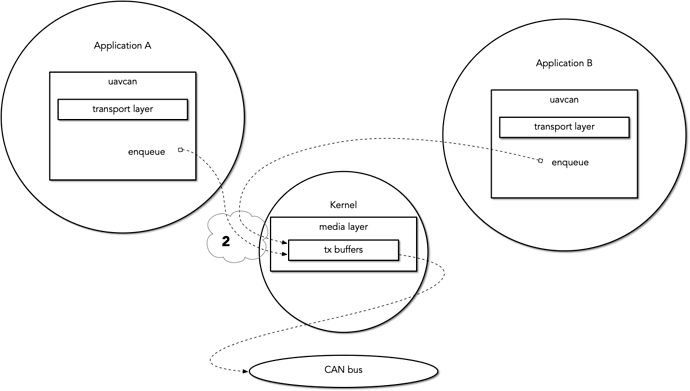

So an architecturally clean solution for adding UAVCAN to the S32K14x chips would be to:

1. Create a CAN controller driver for the S32K14x

2. Create a SocketCAN driver, which would be beneficial for all NuttX targets.

We’ve received feedback from Pavel:

> I concur with the general idea that SocketCAN in Linux may be suboptimal for time-deterministic systems. At the same time, as far as I understand SocketCAN, the limitations are not due to its fundamental design but rather due to its Linux-specific implementation details. I admit that I did not dig deep but my initial understanding is that it should be possible to design a sensible API in the spirit of SocketCAN that would meet the design objectives I linked in this thread earlier.

https://forum.opencyphal.org/t/queue-disciplines-and-linux-socketcan/548

Based on all the feedback, I decided to create a testbed running SocketCAN on a microcontroller. That’s when I found Zephyr RTOS, which is partly POSIX-compatible and provides a SocketCAN implementation.

The testbed consists of:

- NXP FRDM-K64F board with an NXP DEVKIT-COMM CAN transceiver

- Zephyr RTOS

- CAN bus bitrate of 1 Mbit/s

- PC sending an 8-byte CAN frame every 100 ms

When the CAN interrupt occurs, a GPIO pin is driven high; when the userspace application receives the CAN frame, the pin is driven low again. The GPIO is shown by the yellow trace below, and the pink trace is the CAN frame.

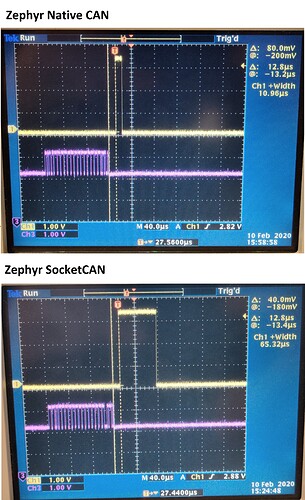

1st measurement: Zephyr native CAN implementation

Time from frame to interrupt: 12.8 µs

Time from interrupt to user space copy: 10.85 µs

On my oscilloscope I don’t see any variance in the “interrupt to user space copy” time, so the jitter is below 0.001 µs.

2nd measurement: Zephyr SocketCAN implementation

Time from frame to interrupt: 12.8 µs

Time from interrupt to user space copy: 65.32 µs

On my oscilloscope I don’t see any variance in the “interrupt to user space copy” time, so the jitter is below 0.001 µs.

Testbed conclusion

- Zephyr SocketCAN increases the interrupt-to-userspace latency by 54.47 µs (65.32 µs versus 10.85 µs). Is this acceptable? I don’t know. I also didn’t look into the specifics of the Zephyr network stack, so there may be room for fine-tuning.

- Zephyr SocketCAN seems to be deterministic and doesn’t increase jitter, which is good real-time behavior.
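To put the 54.47 µs in perspective, the sketch below compares it with the time one 8-byte frame occupies the bus at 1 Mbit/s. It assumes a classic CAN data frame with an 11-bit identifier and ignores stuff bits (the frame format used in the test is not stated above, so this is only a yardstick). The function names are illustrative.

```c
#include <assert.h>

/* Measured interrupt-to-userspace latencies, in hundredths of a
 * microsecond so that the arithmetic stays exact. */
enum {
    NATIVE_IRQ_TO_USER = 1085, /* 10.85 us, native CAN API */
    SOCKET_IRQ_TO_USER = 6532  /* 65.32 us, SocketCAN API  */
};

/* Nominal length in bits of a classic CAN data frame with an 11-bit ID:
 * 44 bits of framing overhead, 8 bits per payload byte, plus 3 bits of
 * interframe space. Stuff bits are NOT counted (assumption). */
unsigned can_std_frame_bits(unsigned payload_bytes)
{
    assert(payload_bytes <= 8);
    return 44u + 8u * payload_bytes + 3u;
}

/* Time on the wire for `bits` at `bitrate_hz`, in hundredths of a us. */
unsigned long long bits_to_centi_us(unsigned bits, unsigned long bitrate_hz)
{
    return (unsigned long long)bits * 100000000ull / bitrate_hz;
}

/* Extra latency added by the socket layer, in hundredths of a us. */
unsigned socketcan_overhead_centi_us(void)
{
    return SOCKET_IRQ_TO_USER - NATIVE_IRQ_TO_USER;
}
```

Under these assumptions an 8-byte frame takes at least 111 µs on the bus, so the added 54.47 µs is roughly half a frame time. This says nothing about throughput or about shorter frames, which arrive faster.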

Most of the points discussed in “RTOS CAN API requirements” can be covered by the SocketCAN API:

- Received/transmitted frames shall be accurately timestamped. This can be achieved by adding a socket option that enables this behavior.

- Transmit frames that could not be transmitted by the specified deadline shall be automatically discarded by the driver. This can also be achieved by a socket option, or by an ioctl if libuavcan wants full control.

- Avoidance of the inner priority inversion problem: I didn’t look into the specifics of the Zephyr CAN implementation, but I assume a correct implementation will avoid this problem.

- SocketCAN allows easy porting of POSIX applications to an RTOS. I have an internal port of libuavcan (master branch) to Zephyr, which was fairly simple to do. It’s working on the FRDM-K64F board, but I still have to find better tooling to measure the real-time performance of the full stack.
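As an illustration of the timestamping point, Linux already exposes receive timestamps through the generic `SO_TIMESTAMP` socket option, delivered as ancillary data on `recvmsg`. The sketch below shows that pattern; the helper names are hypothetical, and whether an RTOS port would reuse this exact option is an assumption. The transmit-deadline option is not shown because it would need a new, yet-to-be-defined option.

```c
#include <assert.h>
#include <string.h>
#include <sys/socket.h>
#include <sys/time.h>
#include <sys/types.h>
#include <sys/uio.h>

/* Ask the stack to attach a receive timestamp to every message. */
int enable_rx_timestamps(int fd)
{
    const int on = 1;
    return setsockopt(fd, SOL_SOCKET, SO_TIMESTAMP, &on, sizeof on);
}

/* Find the SO_TIMESTAMP control message in `msg` and copy it to `out`.
 * Returns 0 if a timestamp was found, -1 otherwise. */
int rx_timestamp_from_msg(struct msghdr *msg, struct timeval *out)
{
    assert(msg != NULL && out != NULL);

    for (struct cmsghdr *c = CMSG_FIRSTHDR(msg); c != NULL;
         c = CMSG_NXTHDR(msg, c)) {
        if (c->cmsg_level == SOL_SOCKET && c->cmsg_type == SO_TIMESTAMP) {
            memcpy(out, CMSG_DATA(c), sizeof *out);
            return 0;
        }
    }
    return -1;
}

/* Receive one message (e.g. a struct can_frame) together with its
 * timestamp. Returns the number of bytes read, or -1 on failure. */
ssize_t recv_stamped(int fd, void *buf, size_t len, struct timeval *stamp)
{
    /* Control buffer sized and aligned for one timeval. */
    union {
        char buf[CMSG_SPACE(sizeof(struct timeval))];
        struct cmsghdr align;
    } ctrl;

    struct iovec iov = { .iov_base = buf, .iov_len = len };
    struct msghdr msg;
    memset(&msg, 0, sizeof msg);
    msg.msg_iov = &iov;
    msg.msg_iovlen = 1;
    msg.msg_control = ctrl.buf;
    msg.msg_controllen = sizeof ctrl.buf;

    ssize_t n = recvmsg(fd, &msg, 0);
    if (n < 0 || rx_timestamp_from_msg(&msg, stamp) != 0)
        return -1;
    return n;
}
```

The application calls `enable_rx_timestamps` once after opening the socket and then `recv_stamped` in its receive loop, so timestamping costs no extra system call per frame.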

If the UAVCAN team can provide feedback on these results that would be great.