Why?

In 2020, arguably the most critical piece of ROS is the software ecosystem built around it. The existing software is the reason why ROS2 is being designed the way it is, with the built-in means of backward compatibility and the old IDL kept intact.

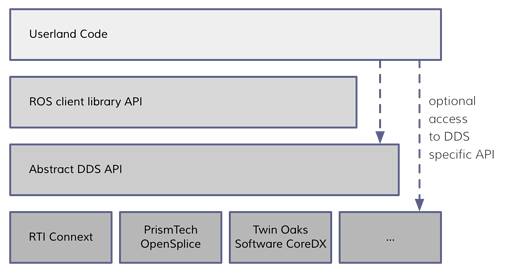

The new ROS2 is intended to stretch the applicability limits of ROS1 in several dimensions, including production systems as opposed to research/lab projects, systems with mild real-time requirements, and systems with some degree of certified functional safety. Depending on how you squint, ROS2 can be seen as a unified abstraction layer built on top of existing off-the-shelf technologies (such as DDS) intended to bridge the ROS software ecosystem with field-proven narrowly specialized solutions instead of homegrown alternatives. The context is introduced in the short articles “Why ROS 2?” and “Stories driving the development”.

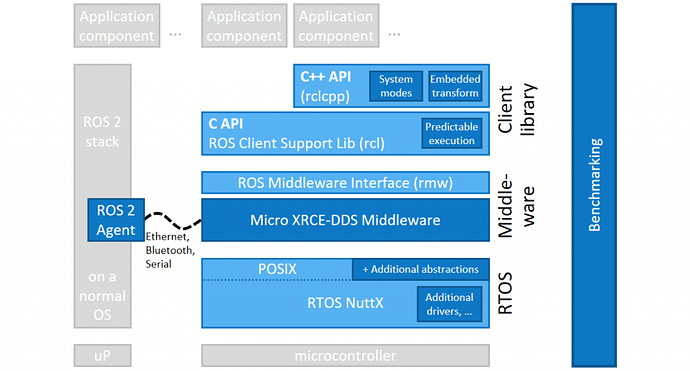

I propose an exploratory study to determine if the ROS ecosystem could theoretically be bridged with high-integrity hard real-time systems, where resource-constrained hard real-time baremetal MCU applications are first-class participants, by using UAVCAN as the underlying communication platform. In the following diagram, the two bottom layers are to be swapped out by UAVCAN (one of the open-source implementation libraries):

How?

There are enough conceptual similarities between the existing ROS communication platforms and UAVCAN to make many of the objectives of this experiment self-evident. The corner cases require some elaboration, though. The description that follows is the very first idea that comes to mind, and as such, it may be suboptimal or even infeasible; further brainstorming is necessary.

UAVCAN subjects map closely to ROS topics, with one crucial difference: UAVCAN offers neither named topics nor namespacing. Instead, it segregates topics (“subjects” in UAVCAN parlance) by numerical identifiers called subject-IDs, which is a much simpler and much less capable method. UAVCAN services and ROS services, despite their apparent similarity, are far from equivalent, even if you ignore the fact that UAVCAN services, like subjects, are identified by simple numerical IDs rather than by names and namespaces. This experiment will not attempt to bridge ROS services with UAVCAN services; it will emulate them on top of UAVCAN subjects instead, much as described in the article “ROS on DDS”.

UAVCAN's lack of the required topic (subject) differentiation capabilities can be addressed by a robust decentralized registry that maps ROS topic/service names to UAVCAN subject-IDs. Building upon the well-explored Raft consensus algorithm, which is already used in UAVCAN for decentralized plug-and-play node-ID allocation, the registry can be implemented as a distributed append-only log of entries, where each entry contains the name of the ROS topic/service and the corresponding UAVCAN subject-ID (or a pair thereof in the case of ROS services). The “append-only” constraint can be lifted later (it requires marginally more complex behaviors in Raft). Let the set of nodes that maintain the shared registry be referred to as “decentralized ROS master(s)”.
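To make the shape of the registry more concrete, here is a minimal single-process sketch in Python. It models only the append-only log and the name lookup; the Raft replication is deliberately left out. The class names, the subject-ID pool boundaries, and the example service name /foo/bar/get_state are all assumptions made up for illustration, not part of UAVCAN or ROS.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical pool of subject-IDs set aside for automatic allocation.
FIRST_SUBJECT_ID = 1000
LAST_SUBJECT_ID = 1999


@dataclass(frozen=True)
class RegistryEntry:
    name: str                     # ROS topic or service name
    subject_ids: Tuple[int, ...]  # one ID for a topic, a pair for a service


class Registry:
    """Single-process stand-in for the replicated append-only log."""

    def __init__(self) -> None:
        self._log: List[RegistryEntry] = []
        self._index: Dict[str, RegistryEntry] = {}
        self._next_id = FIRST_SUBJECT_ID

    @property
    def log(self) -> Tuple[RegistryEntry, ...]:
        return tuple(self._log)

    def resolve(self, name: str, is_service: bool = False) -> Tuple[int, ...]:
        """Return the subject-IDs for a name, allocating them on first use."""
        entry = self._index.get(name)
        if entry is None:
            count = 2 if is_service else 1
            if self._next_id + count - 1 > LAST_SUBJECT_ID:
                raise RuntimeError("subject-ID pool exhausted")
            ids = tuple(range(self._next_id, self._next_id + count))
            self._next_id += count
            entry = RegistryEntry(name, ids)
            # In the distributed design, this append would be a Raft commit.
            self._log.append(entry)
            self._index[name] = entry
        return entry.subject_ids


registry = Registry()
print(registry.resolve("/foo/bar/bms_status"))                   # (1000,)
print(registry.resolve("/foo/bar/get_state", is_service=True))   # (1001, 1002)
print(registry.resolve("/foo/bar/bms_status"))                   # (1000,)
```

Because entries are never removed or rewritten, a repeated lookup of the same name always yields the same subject-IDs, which is the property the append-only constraint is meant to provide.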

A vital design objective is the seamless collaboration of nodes that are part of the ROS ecosystem (built on top of the ROS API) and regular UAVCAN nodes that know nothing about ROS and are merely designed following the recommended practices as advised by the UAVCAN specification/guide. One question that arises here is how to integrate such regular nodes with the ROS computation graph. Native UAVCAN nodes use subject-IDs configured via the Register API using reserved register name patterns; for example, a register named uavcan.pub.bms_status would define the subject-ID of BMS status messages published by a given node. In a typical UAVCAN application, a newly integrated node is configured once to set up the subject-IDs for every relevant function of the node. This is similar to the typical workflow of roslaunch (at least this is true for ROS1; in ROS2, the capabilities of Launch are far more extensive, but we are only interested in the trivial cases so far because this is a mere exploration/PoC).

What I suggest is to make the decentralized ROS master responsible for establishing the correct configuration of every node in the network by writing the correct subject-ID register values in accordance with the namespace in which the node resides. Expanding upon the above example, if the BMS node is launched in the ROS namespace /foo/bar/, it will automatically appear in the ROS graph as the publisher of the topic /foo/bar/bms_status, where bms_status comes from the register named uavcan.pub.bms_status. The decentralized ROS master chooses the specific subject-ID values automatically and then commits them to the shared log described above.
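The name derivation in this example can be sketched as a small Python function. The function name and its handling of malformed input are assumptions for illustration; only the uavcan.pub. prefix and the /foo/bar/ example come from the text above.

```python
from typing import Optional


def topic_from_register(namespace: str,
                        register_name: str,
                        prefix: str = "uavcan.pub.") -> Optional[str]:
    """Derive a ROS topic name from a node's namespace and a register name.

    Returns None if the register does not follow the given prefix pattern.
    """
    if not register_name.startswith(prefix):
        return None
    port_name = register_name[len(prefix):]
    if not port_name:
        return None
    # Drop empty segments so that "/foo/bar" and "/foo/bar/" behave alike.
    segments = [s for s in namespace.split("/") if s]
    return "/" + "/".join(segments + [port_name])


print(topic_from_register("/foo/bar/", "uavcan.pub.bms_status"))
# /foo/bar/bms_status
```

The resulting topic name is what the decentralized ROS master would look up in (or add to) the shared log to obtain the subject-ID that it then writes into the node's register.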

In the case of ROS graph resource remapping, the mapping information is to be stored on the decentralized ROS master alongside the topic-subject-ID log. I will skip the detailed description here because this post is not a design specification. Similar principles apply to ROS service and ROS configuration parameter mappings.

In short, what I am describing is a regular UAVCAN bus amended with certain special capabilities provided by the decentralized ROS master nodes. It is vital that we do not alter the conventional design approaches of regular ROS nodes or regular UAVCAN nodes; otherwise, the value of the resulting architecture would be greatly diminished. A UAVCAN node that is freshly integrated into a new system does not need to know whether its registers are being written by a human integrator or by the decentralized ROS master: the observable behavior is the same in both cases. The same holds for ROS nodes: when launching a new node or making a subscription, the ROS user does not need to know that the action translates to sending certain requests to the decentralized ROS master.

I think this is a relevant and interesting exercise that a motivated developer can perform single-handedly in a reasonable amount of time. I think the best starting point is to define the logic and the UAVCAN service interface for the aforementioned decentralized ROS master nodes and implement that on top of PyUAVCAN. We will be looking for collaboration partners willing to take part and drive this experiment.